-

Introducción a Snowflake

¿Qué es Snowflake y para qué se utiliza?, Snowflake AI Data Cloud, Arquitectura de Snowflake, Introducción a SnowSQL, Carga y gestión de datos, Creación de tablas y esquemas, Consultas básicas en SQL, Gestión de usuarios y roles, Configuración de virtual warehouses, Snowpark, Streamlit, Consejos para optimizar los costes y el rendimiento.

¿Qué es Snowflake y para qué se utiliza?, Snowflake AI Data Cloud, Arquitectura de Snowflake, Introducción a SnowSQL, Carga y gestión de datos, Creación de tablas y esquemas, Consultas básicas en SQL, Gestión de usuarios y roles, Configuración de virtual warehouses, Snowpark, Streamlit, Consejos para optimizar los costes y el rendimiento. -

Big Data: ISO 8000 y la calidad de los datos

Big Data: ISO 8000 y la calidad de los datos ¿Cuáles son las normas, estándares y marcos que nos ayudan a gestionar la calidad de los datos en entornos Big data?

Big Data: ISO 8000 y la calidad de los datos ¿Cuáles son las normas, estándares y marcos que nos ayudan a gestionar la calidad de los datos en entornos Big data? -

Goodbye 2022 Hello 2023

Un nuevo año comienza y repaso las palabras del 2022 más utilizadas dentro de mi documentación, un reflejo del trabajo realizado a lo largo del mismo.. El 2022 ha sido el año de los cursos de Python, orientados a Big Data (Hadoop, Spark) y Analytics (pandas, matplotlib y numpy). Tampoco han faltado los cursos de automatización con DevOps y el uso de GCP o Amazon Cloud.

Un nuevo año comienza y repaso las palabras del 2022 más utilizadas dentro de mi documentación, un reflejo del trabajo realizado a lo largo del mismo.. El 2022 ha sido el año de los cursos de Python, orientados a Big Data (Hadoop, Spark) y Analytics (pandas, matplotlib y numpy). Tampoco han faltado los cursos de automatización con DevOps y el uso de GCP o Amazon Cloud. -

Fundamentos de Big Data

Libro Fundamentos de Big Data. Comparto un Jupyter Book que he realizado con los apuntes elaborados para el curso de Fundamentos de Big Data. Dentro del temario se ha visto una introducción al Big Data y al análisis de datos, Mercado y tendencias, su historia, Ejemplos de casos de usos, Buenas prácticas, y Procesos de Big Data entre otras cosas.

Libro Fundamentos de Big Data. Comparto un Jupyter Book que he realizado con los apuntes elaborados para el curso de Fundamentos de Big Data. Dentro del temario se ha visto una introducción al Big Data y al análisis de datos, Mercado y tendencias, su historia, Ejemplos de casos de usos, Buenas prácticas, y Procesos de Big Data entre otras cosas. -

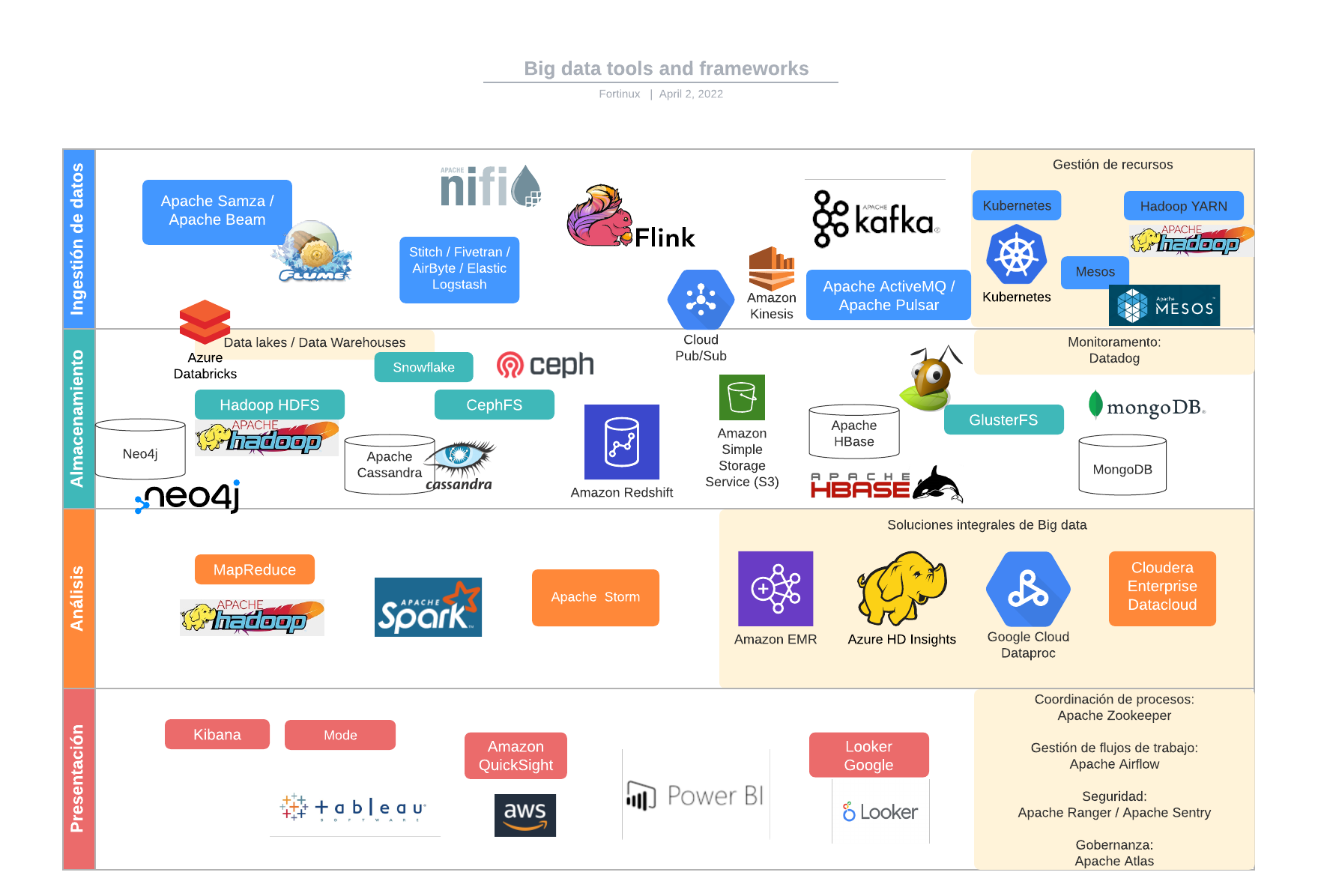

Big Data Fundamentals, Part II

I’m sharing Big Data Fundamentals, Part II, (Part I is here) with an introduction to Big Data covering: Big Data processes: ingest, store, process/query, visualize; tools and technologies: Hadoop, Sqoop, Kafka, Mesos, Redis, CouchDB; Document stores: MongoDB; Column stores: HBase + Cassandra; Big Data analytics: Spark, Storm; and Elastic Stack: Logstash, ElasticSearch and Kibana.

We’ll see also Machine learning techniques with Spark (MLlib, Streaming) and TensorFlow.

08004 – Barcelona

Cataluña – España

info@fortinux.com

SLA 24 hs. Soporte Online

0034 – 644 79 25 79

Lun – Vie 9:00 AM a 6:00PM